Content from 2017-06

Tags

Months

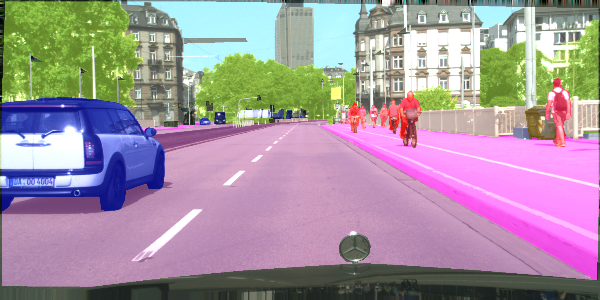

Semantic segmentation is a process of dividing an image into sets of pixels sharing similar properties and assigning one of the pre-defined labels to each of these sets. Or, in other words, you get a picture, and you're supposed to tell which pixels constitute a car and which constitute the pedestrians. Fun stuff.

This post is an update to the previous post discussing a newer version of TensorFlow.