Content from 2017-11

Tags

Months

AWS has recently introduced the P3 instances. They come with Tesla V100 GPUs, so I decided to run a little benchmark to see how well they perform compared to my workstation (GeForce 1080 Ti) when training neural networks.

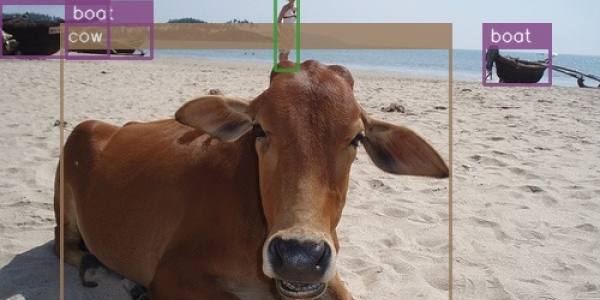

There was no understandable and straightforward implementation of SSD in TensorFlow, so I decided to make one. The original paper assumes familiarity with related research, so I needed to plow through several additional papers and a ton of source code to understand what's going on here. This post is an attempt to provide all that missing context in one place.